So when I was asked to do up the specs for our new DR server, I thought about using a cloud hosting provider who could give us storage and only charge us for CPU and RAM when we needed them. It turns a huge capital expenditure into a small operational expenditure, with compute paid for only in the event of a disaster (or during DR testing). This is where being able to purchase compute power on demand, separately from storage, absolutely shines.
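To make the capital-versus-operational comparison a bit more concrete, here is a rough sketch of the arithmetic. Every dollar figure, the class names and the number of DR test hours are placeholders invented for illustration; they are not real quotes from any vendor or provider, so substitute your own numbers.

```python
from dataclasses import dataclass


@dataclass
class OwnedDrServer:
    """Dedicated DR server bought upfront and hosted in a data centre."""
    purchase_cost: float           # one-off capital expenditure
    hosting_cost_per_month: float  # ongoing hosting fee

    def total_cost(self, months: int) -> float:
        return self.purchase_cost + self.hosting_cost_per_month * months


@dataclass
class OnDemandDr:
    """Cloud DR: storage is paid for every month, compute only when used."""
    storage_cost_per_month: float
    compute_cost_per_hour: float

    def total_cost(self, months: int, compute_hours: float) -> float:
        return (self.storage_cost_per_month * months
                + self.compute_cost_per_hour * compute_hours)


# Placeholder figures only (assumptions, not real prices).
owned = OwnedDrServer(purchase_cost=40_000, hosting_cost_per_month=500)
cloud = OnDemandDr(storage_cost_per_month=300, compute_cost_per_hour=5)

months = 60                # five years
dr_test_hours = 5 * 2 * 8  # assumed: two 8-hour DR tests per year

owned_total = owned.total_cost(months)
cloud_total = cloud.total_cost(months, dr_test_hours)

print(f"Owned server over {months} months: ${owned_total:,.0f}")
print(f"On-demand over {months} months:    ${cloud_total:,.0f}")
```

An actual disaster would add compute hours to the on-demand total, so it is worth rerunning the numbers with a few weeks of continuous compute to see where the two options meet.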

Our previous DR server cost almost as much as our production environment and sat there not doing much for five years before being decommissioned. Now, I'm not saying it was a complete waste of money; had there been a disaster it would have been worth every cent. But for a small to medium sized organisation that's a huge upfront cost that I think can be avoided.

#Veeam backup to AWS S3 bucket#

Unfortunately, a DR solution can be costly. Under our previous strategy we bought a server with enough hard disk space, RAM and CPU grunt to hold our off-site backups and to spin up all our VMs, replicating our production environment in the event of a disaster. I can't remember the exact price of the server, but it was in the tens of thousands of dollars, plus the ongoing cost of hosting it in a data center somewhere.


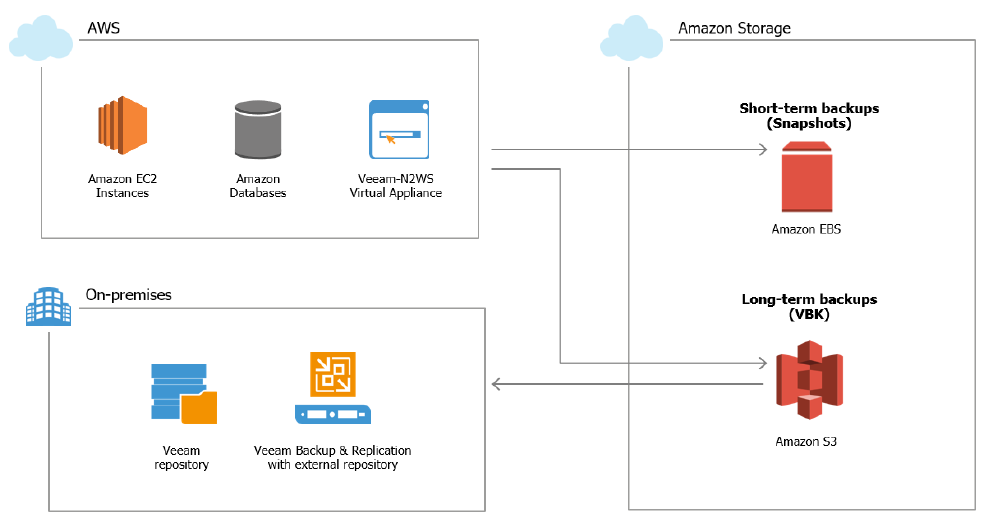

There is an old saying that "there are two types of sysadmins, those that do make backups and those that will make backups". Hopefully there are not too many of the second group. But backing up the data is only part of the picture; you also need to think about how you are going to restore it as part of a full disaster recovery (DR) plan.

If anyone wants to back up directly to S3, you can, in an unsupported way. You need a virtual environment (VMware / Hyper-V) where you can deploy an S3 SMB Gateway VM with a large cache (bigger than one full job). Add it as a repository, then just point a job at it. I set the repository to use individual files for each VM. I found the best thing to do is a Backup Copy job with weekly GFS enabled and the "copy from source not increments" option enabled. Every week it does a full copy of the latest chain to the cache, then uploads it asynchronously. It goes slowly but it seems to work great, and I've been able to pull backups back down to restore when needed without much trouble.

The new option to use it for scale-out capacity is a nice option between my solution and VTL. I can see the reason it's scale-out only: you don't want to send backup data directly to S3, and the higher tiers probably act like the gateway's cache, giving the system a place to read from that is not a live VM snapshot. I wanted my latest weekly chain in the cloud; recovering from 1 month ago or later in a disaster isn't worth it for my setup. I will be testing the new option though, just to see how performance is.

You can achieve pretty much the same thing by adding a capacity tier to the SOBR and setting the operational restore window to 7 days. This way, all sealed backups older than 7 days will be automatically transferred to object storage.

OK, so I tried to set it up to achieve the same thing.

The old setup: Basic Repositories on NAS1, NAS2. Primary Copy Job to NAS2, runs continuously. Secondary Copy Job to AWS Gateway with cache on NAS3, runs every 7 days, with weekly GFS and copy full from source enabled (this prevents injecting increments, which incurs S3 read costs).

The new setup: Basic Repositories on NAS1, NAS2. Scale-out Repository for NAS3 with Capacity Tier in S3, with "Move backups older than 0 days" set. Secondary Copy Job to Scale-out Repository (NAS3 + S3), runs every 7 days, with weekly GFS and copy full from source enabled (this essentially turns the performance tier into just a cache).

So hopefully after the Secondary Copy completes, the system will start uploading to S3. I'll check back in a week after it's got something to back up.

It's essentially the exact same packets to the same devices, just different containers. NAS3 was a gateway cache; now it's essentially a cache for the scale-out capacity tier. So far the only real advantage I can see is that I don't have to supply a gateway with its own allocated RAM. Not a huge difference, but not bad either. And it's reasonably simpler to have it all in one place. I use two S3 gateways: one is all NFS mounts for system-level (no credentials, IP whitelist) robocopy file backups to buckets; the other is SMB mounts of the same buckets for AD user authenticated access. This means I won't actually be able to remove the SMB gateway, but that's just my use case.

Excuse my ignorance, and for bumping an old thread, but I'm having issues adding the gateway as a repository. I keep getting the "Failed to get disk space" error and all the troubleshooting I have found for that doesn't work. Are there any specific ways to do this?
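The capacity tier rule mentioned in the thread (sealed backups older than the operational restore window get moved to object storage) can be modelled in a few lines. This is only a simplified sketch of that one rule, not Veeam's actual implementation or API: `RestorePoint`, `points_to_offload` and the `sealed` flag are hypothetical names made up for the example, and the real product may apply further conditions.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class RestorePoint:
    created: date
    sealed: bool  # assumed meaning: the chain is closed, no new increments


def points_to_offload(points, today, restore_window_days):
    """Return restore points that are sealed and older than the window."""
    cutoff = today - timedelta(days=restore_window_days)
    # Strictly older than the window: a point exactly N days old stays put.
    return [p for p in points if p.sealed and p.created < cutoff]


today = date(2024, 1, 15)
points = [
    RestorePoint(created=date(2024, 1, 1), sealed=True),
    RestorePoint(created=date(2024, 1, 8), sealed=True),
    RestorePoint(created=date(2024, 1, 14), sealed=False),
]

# Window of 7 days: only the sealed point from 1 January qualifies.
week_window = points_to_offload(points, today, restore_window_days=7)

# Window of 0 days: every sealed point qualifies, so the performance
# tier ends up holding little more than the active chain.
zero_window = points_to_offload(points, today, restore_window_days=0)

print([p.created.isoformat() for p in week_window])
print([p.created.isoformat() for p in zero_window])
```

Under these assumptions, the 0-day setting is what makes the performance tier behave like a cache, which matches the behaviour described in the thread.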